By Erik Kleinsmith

Associate Vice President, Intelligence Studies, National Security & Homeland Security

When I was an intelligence officer for an Army Infantry Battalion (Regulars by God!), the soldiers of my S2 shop put a sign on the door to our office in the Battalion Headquarters building. It read, “1-6 Infantry S2 – We do the thinking so you don’t have to.” While it was a tongue-in-cheek jab at our infantry brothers then, it has become almost prophetic in parts of our society today.

In a hubristic attempt to do our thinking for us, it appears today’s media and social media providers have declared war on misinformation. From Facebook’s misinformation policy and Twitter’s ever-evolving community standards to the rise of fact checkers as a growing industry, there has been an increasing trend within our media and social media to identify and defeat posts, stories, videos, comments, and even memes that they have defined as misinformation. This persistent quest has such a high priority that misinformation has become the catchall term to encompass fake news, alternative facts, propaganda, and the like.

The problem is that many analysts, planners, decision makers, and even just rational thinkers require all kinds of information – accurate, misleading, factual, and even deceptive – to do the job properly. Not only must we continue to deal with misinformation, we now have to deal with the problems created by outside attempts to stifle that misinformation.

Defining Misinformation

Roughly defined as information that is inaccurate or false, misinformation can be unintentional or deliberate, and its consequences can range from a minor annoyance to a catastrophe. Misinformation, along with its more malicious subset, disinformation, has always been present in our everyday lives, and alongside the regular information we rely upon, it is growing at an exponential rate. Because it is present in almost every aspect of society, including the intelligence and national security arenas, managing its impact on our analysis and decision-making is a never-ending issue.

One of the main problems in dealing with misinformation is that there is already a lot of misinformation about what it actually is and how it affects us. This is especially true in the way it is defined or how it is placed within the context of our analysis and decision-making.

Misinformation as a Virus

For example, one of the most common ways to define misinformation is to describe it as a virus. Perhaps spurred on by social media terms such as “going viral” for a popular meme or video, misinformation as a virus is something that spreads from an original source, infecting those who in turn infect others with their own social media posts and comments. According to this definition, those who are most susceptible are those who are uninformed or are already receptive to the false narrative or ideology the misinformation supports.

As a result, those who spread misinformation are seen as infected, unclean, unintelligent, and lacking in rational/cognitive capabilities in comparison to the more rational thinkers who agree with you. After all, how could anyone but a mindless zombie believe the garbage of (name your source)? They must have the dreaded infection of (name your ideology).

With this perspective, the only way to manage misinformation is to completely eradicate it or inoculate the population against it. To do this, we use methods such as fact-checking and labeling, or the more drastic measures of shadow banning a source or the outright censorship of content. To combat a virus or lies, these methods are increasingly seen as viable options, no matter how antithetical they are to the principles of free speech.

The rationale is that it is better to have infringements on our free speech than to allow this tripe to pass unchallenged. As I’ve covered in a previous article, many of these methods simply are not effective at preventing a message from getting out. And those being censored will work twice as hard to be heard – a human characteristic, not a viral one.

Understanding Misinformation in the Context of Intent

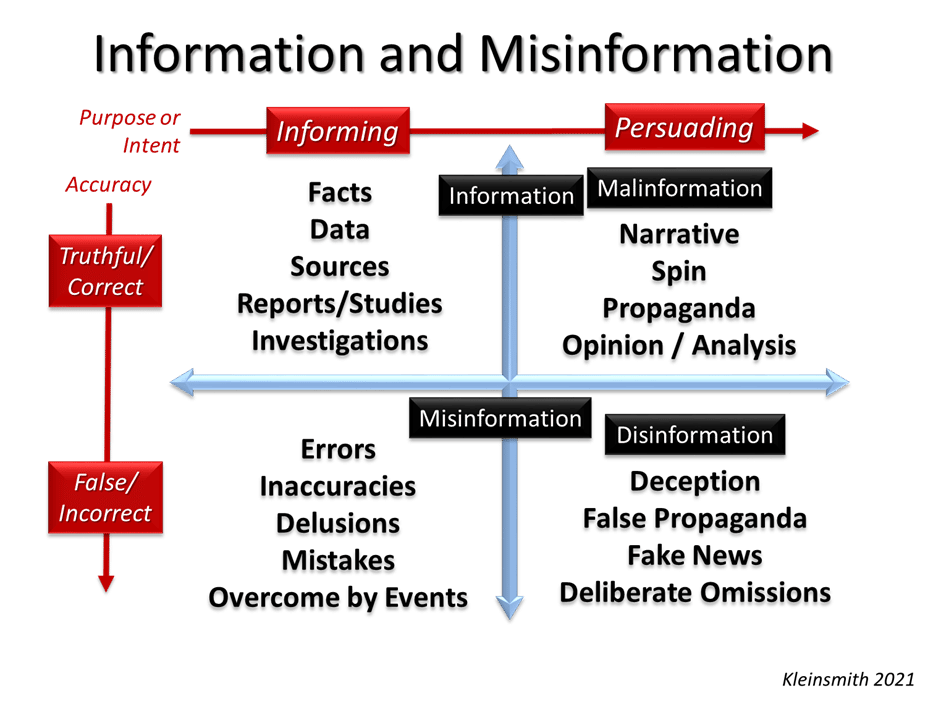

Perhaps the best way to understand misinformation is to look at it in the context of all its many different definitions. Online dictionaries and academic publications have many different definitions for misinformation, but most include the distinction between its purpose and intent. With that in mind, there is a way to map it out so that we can understand and analyze it more effectively:

In the diagram above, I have arranged information and misinformation in the context of its accuracy and the intent of the messenger. This gives us four general and somewhat overlapping categories of information, each with its own characteristics. Instead of looking at misinformation as a virus, this perspective tells us we should realize that most misinformation is a natural occurrence. Our entire lives are spent learning and educating ourselves in order to identify, fix, and minimize the effects of errors and mistakes, both personally and professionally.

Almost all misinformation has more to do with the perception of the recipient than with the facts themselves. Facts don’t have feelings but everyone else does. We always analyze facts within our own mental framework – our ideas, preconceptions, biases and ideologies – often in the same way a lens is used to focus on a subject more clearly.

In this way, much of what is perceived as misinformation is actually truth that is twisted and spun into a persuasive narrative. Sometimes called “malinformation,” much of this cannot be called a lie; it is instead a distortion of the truth to benefit a narrative or messenger. The problem here is that this is a very subjective area that depends on the differing perspectives of the recipients. Drastic measures like censorship routinely run into trouble when trying to control this type of information.

Finally, while most misinformation is a natural occurrence, it is frequently used as a method of exploitation to push a given narrative or to manipulate the perceptions or decision-making of a given target or target group. Misinformation that is spread deliberately is disinformation, and it can take many forms, including omitting certain aspects of the truth in order to tell a biased or one-sided story.

Armed with this perspective, analysts, researchers, and anyone who needs accurate information to do their job can more easily classify the information they receive and, in turn, develop strategies to minimize the impact that misinformation will have on their operations.

In a follow-up article, I will discuss differing strategies and why some succeed while others can do more harm than good.